Bridge Structural Monitoring System

The collapse of the I-35W Mississippi River Bridge in Minneapolis, Minnesota, on August 1, 2007, was a calamity of huge proportion. Carrying over 135,000 vehicles daily, the bridge failed during the evening rush hour, killing thirteen people and injuring 145. Plans for a replacement bridge were formulated almost immediately, and the new bridge opened on September 18, 2008. But unlike its ill-fated predecessor, the new I-35W Saint Anthony Falls Bridge is designed with an integral, state-of-the-art monitoring system that continuously assesses bridge integrity to help ensure that such a catastrophic failure is not repeated. That monitoring system was implemented using DATAQ Instruments' distributed Ethernet data acquisition products, which provide the optimal blend of data acquisition power and the ability to capture measurements across the bridge's immense 1,200-foot length.

What to measure, and where to measure it?

The data acquisition system that the Minnesota Department of Transportation (MnDOT) deployed to monitor the bridge's vital signs had to address many requirements. Chief among them was deciding which sensor types to use and where on the bridge to locate each one to yield optimal diagnostics.

| Sensor | Measurement | Comments |
|---|---|---|
| Accelerometer | Vibration | Vertical orientation |
| Linear potentiometer | Distance | Expansion joint position and movement |
| Strain gage | Tensile and compressive load | Embedded in concrete, these transducers monitored the curing process and have been dormant since. They can be reactivated at any future time. |

Table 1 - The range of sensor types deployed at multiple locations on the I-35W Bridge.

This range of sensors needed to be deployed at strategic structural locations across the bridge's span. For this purpose, the bridge's 1,200-foot span was mapped into eight nodes. Each node can be thought of as a cluster of sensors of the same or different types. Table 2 describes how sensors are allocated among the eight nodes.

| Node | Sensor | Sensitivity | Quantity |
|---|---|---|---|
| 1 | Accelerometer | 0.995 V/g | 2 |
| 1 | Linear potentiometer | 200 mV/inch | 4 |
| 2 | Accelerometer | 1.007 V/g | 2 |
| 3 | Accelerometer | 1.007 V/g | 2 |
| 3 | Linear potentiometer | 200 mV/inch | 2 |
| 4 | Accelerometer | 0.995 V/g | 2 |
| 4 | Linear potentiometer | 200 mV/inch | 2 |
| 5 | Accelerometer | 1.004 and 0.995 V/g | 2 |
| 5 | Strain gage | 5 mV full scale | 24 |
| 6 | Accelerometer | 0.991 V/g | 10 |
| 7 | Accelerometer | 1.008 V/g | 4 |
| 8 | Accelerometer | 1.007 V/g | 2 |
| 8 | Linear potentiometer | 200 mV/inch | 4 |

Table 2 - Nodes were established at strategic locations on the bridge to ensure worst-case measurements, and sensors with the indicated output levels were allocated to the nodes according to measurement requirements.
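As a quick consistency check, the node totals in Table 2 can be tallied in a few lines. This sketch simply transcribes the table (the variable names are illustrative) and confirms the sixty-two-channel total and the twenty-six-sensor maximum quoted elsewhere in this article, as well as that every node fits on a single 32-channel DI-5001 board, assuming one sensor per analog channel.

```python
from collections import defaultdict

# Table 2 transcribed as (node, sensor, quantity) tuples.
TABLE_2 = [
    (1, "Accelerometer", 2), (1, "Linear potentiometer", 4),
    (2, "Accelerometer", 2),
    (3, "Accelerometer", 2), (3, "Linear potentiometer", 2),
    (4, "Accelerometer", 2), (4, "Linear potentiometer", 2),
    (5, "Accelerometer", 2), (5, "Strain gage", 24),
    (6, "Accelerometer", 10),
    (7, "Accelerometer", 4),
    (8, "Accelerometer", 2), (8, "Linear potentiometer", 4),
]

CHANNELS_PER_BOARD = 32  # analog inputs on one DI-5001 board, per the text


def channels_per_node(table):
    """Sum sensor quantities per node (one sensor per analog channel)."""
    totals = defaultdict(int)
    for node, _sensor, qty in table:
        totals[node] += qty
    return dict(totals)


per_node = channels_per_node(TABLE_2)
total = sum(per_node.values())
busiest = max(per_node, key=per_node.get)

print(f"total channels: {total}")                                  # 62
print(f"busiest node: {busiest} ({per_node[busiest]} channels)")   # node 5 (26 channels)
print("one board per node suffices:",
      all(n <= CHANNELS_PER_BOARD for n in per_node.values()))    # True
```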

To identify specific node locations, the bridge is divided into northbound and southbound lanes, and major structural supports are denoted as abutments and piers. Beginning at the south end of the bridge and moving north, the order is abutment 1, pier 2, pier 3, pier 4, and abutment 5. Figure 3 provides a schematic view of the bridge that includes structural and node (Nx) locations as well as the distances between them. Nodes not located directly on a pier are placed midway between structural supports (theoretically the weakest point) to capture worst-case measurements.

Data acquisition challenges require unique solutions

Measurement nodes are clusters of as many as twenty-six sensors, and as Figure 3 shows, the distances between nodes can be great. Further, the plan was to install a computer in a blockhouse located approximately 340 feet from the northbound exit of the bridge. The computer would connect to a data acquisition system and provide 24/7/365 monitoring of bridge condition with remote access. This arrangement created two major data acquisition problems, cabling and synchronization, that had to be solved before a monitoring system for the I-35W Bridge could be realized.

The total number of channels to be acquired was sixty-two, which is not an overwhelming number for contemporary data acquisition solutions. However, running signal leads from sixty-two sensors to one central location is not practical. The optimal location for a single data acquisition system is the middle of the bridge (at node 2 or 6), and as Figure 3 shows, even this would result in signal paths exceeding 400 feet in some cases. Over such distances, low-level signals, some only a few millivolts, would suffer line loss and induced noise that would degrade measurements to the point of making them useless for their intended purpose. Further, stringing shielded, multi-conductor signal cable over such distances is difficult and costly. For these reasons, the single data acquisition system approach was abandoned.

A second option was to install a data acquisition system at each measurement node, which resolves the problems of a centralized system. Signal cable lengths would be minimized, and connecting sensors to the data acquisition system over these short distances would be both practical and cost-effective. The problem was how to synchronize eight data acquisition systems distributed across more than 1,800 feet of northbound and southbound bridge segments.
Channel synchronization is crucial to subsequent data analysis and interpretation of bridge performance. Each of the sixty-two channels must be acquired in a predictable time slot relative to the others. Without this, an event on one channel cannot be interpreted in terms of the other channels, and the effect of that event on the structure as a whole is lost forever. It would be like trying to understand a spoken sentence whose words arrive at your ear in a different order than they were spoken. A single centralized data acquisition system solves the synchronization problem inherently, but synchronizing multiple systems spread over 1,800 feet is a major challenge.
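Why not simply start eight independent systems at the same moment and let them run? A back-of-the-envelope sketch shows how quickly free-running units drift apart. The 100 ppm mismatch between two units' oscillators is a hypothetical figure chosen for illustration, not a DI-5001 specification, and the function name is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def drift_samples(ppm_mismatch: float, elapsed_s: float, rate_hz: float) -> float:
    """Samples by which two free-running acquisition units drift apart.

    Oscillators whose frequencies differ by `ppm_mismatch` parts per million
    accumulate ppm_mismatch * 1e-6 seconds of timing error per second.
    """
    skew_s = ppm_mismatch / 1e6 * elapsed_s
    return skew_s * rate_hz


# Hypothetical 100 ppm mismatch, 1 kHz sampling, one day of operation.
print(drift_samples(100, SECONDS_PER_DAY, 1000))  # about 8640 samples (8.64 s)
# A shared master clock removes the mismatch entirely.
print(drift_samples(0, SECONDS_PER_DAY, 1000))    # 0.0
```

Even within the first minute, the hypothetical units in this sketch would be about six samples apart at 1 kHz, which is why a common clock, rather than a common start time alone, is needed.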

Synchronous Data Acquisition Using Ethernet

The ideal instrumentation solution for the I-35W Bridge would use one data acquisition system per node to maintain signal integrity and keep cabling costs low, while also synchronizing the systems dispersed across the eight measurement nodes, with more than 2,000 feet separating the data-gathering computer from the last node. DATAQ Instruments' synchronous Ethernet data acquisition products provided such a solution. One DATAQ Instruments OEM-type DI-5001 data acquisition card with an Ethernet interface was supplied for each data acquisition node. Each board supports 32 analog channels, one sensor input per channel, and features dual RJ45 connectors that allow standard CAT-5 Ethernet cable to daisy chain the eight DI-5001 boards together. This approach keeps transducer signal leads short and substitutes low-cost CAT-5 Ethernet cable for the long runs between nodes.

The daisy-chained Ethernet approach also solved other problems. It is modular and expandable, so nodes can easily be swapped, added, or removed as the application requires. Because Ethernet ports are isolated as part of the standard, data communication remains reliable even in the presence of common-mode voltages. Finally, because each unit's synchronous interface provides a built-in Ethernet switch, each cable run, whether between individual nodes or between the nodes and the blockhouse, can be as long as 100 meters.

The synchronizing mechanism uses the two CAT-5 cable pairs that Ethernet leaves unused. One pair carries a master 16 MHz clock, and the other carries a trigger signal. The clock is daisy-chained between DI-5001 units, and the Ethernet interface of each unit incorporates a phase-locked loop (PLL) that provides failsafe operation and reproduces the master clock with zero phase delay.
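The clock-and-trigger scheme can be modeled in a few lines. This is a sketch under stated assumptions, not a description of DI-5001 internals: it assumes each unit derives its sample clock by integer division of the 16 MHz master clock and starts counting on a trigger edge, and it assumes ideal clock distribution. The divider scheme, function names, and per-unit latency values are all hypothetical. It contrasts a shared hardware trigger with a start command sent as an ordinary Ethernet packet, which reaches each unit after a different network delay.

```python
MASTER_CLOCK_HZ = 16_000_000  # master clock carried on a spare CAT-5 pair


def divider_for(rate_hz: int) -> int:
    """Hypothetical integer divider deriving a sample clock from the master clock."""
    if rate_hz <= 0 or MASTER_CLOCK_HZ % rate_hz:
        raise ValueError("rate must be a positive divisor of the master clock")
    return MASTER_CLOCK_HZ // rate_hz


def conversion_times(start_tick: int, rate_hz: int, n: int) -> list[float]:
    """Instants (seconds) of the first n conversions, counted in master-clock ticks."""
    div = divider_for(rate_hz)
    return [(start_tick + k * div) / MASTER_CLOCK_HZ for k in range(n)]


# Hypothetical delays (in master-clock ticks) with which a packet-based start
# command might reach each unit.
latency_ticks = {"N1": 0, "N2": 1_200, "N3": 5_700}

# Shared trigger line: every unit starts on the same master-clock edge.
hw = {u: conversion_times(0, 1000, 3) for u in latency_ticks}
# Packet-based start: each unit starts whenever its command arrives.
sw = {u: conversion_times(lat, 1000, 3) for u, lat in latency_ticks.items()}

for u in latency_ticks:
    offset_us = (sw[u][0] - hw[u][0]) * 1e6
    print(f"{u}: packet-start offset {offset_us:.2f} us")
```

With the shared trigger, every unit's conversion schedule is identical by construction; with packet-based starts, the schedules differ by the network delay, which is the latency the dedicated trigger pair avoids.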
The failsafe feature is a unique aspect of the PLL that maintains lock in the event of momentary, or even longer-term, interruptions in the daisy-chained master clock. Incorporating a PLL into the Ethernet interface of each DI-5001 keeps every data acquisition unit precisely synchronized to the master clock in both frequency and phase, guaranteeing synchronous analog-to-digital conversions between individual units and between individual channels. The master trigger signal on the second CAT-5 cable pair completes the picture by ensuring that all units in the distributed chain begin sampling at the same instant, unaffected by network latency. Referring again to Figure 3, where the distance between nodes, or between a node and the computer, exceeds 100 meters, a relay bridges the gap. Model DI-789 relays, denoted as Rx in Figure 3, are specially designed to carry data and synchronous timing signals between nodes and ultimately to the connected computer for display and storage.
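The 100-meter rule translates directly into a relay count for any cable run. A minimal sketch, assuming relays can be placed wherever needed and that each copper segment between devices must not exceed 100 meters; the run names and lengths below are hypothetical, since the actual distances appear only in Figure 3.

```python
import math

MAX_SEGMENT_M = 100.0      # Ethernet copper segment limit cited in the text
METERS_PER_FOOT = 0.3048


def relays_needed(run_m: float) -> int:
    """Relays needed so that no copper segment of a run exceeds 100 m."""
    if run_m <= 0:
        raise ValueError("run length must be positive")
    return math.ceil(run_m / MAX_SEGMENT_M) - 1


# Hypothetical cable runs in feet.
for name, feet in {"run A": 250, "run B": 340, "run C": 700}.items():
    meters = feet * METERS_PER_FOOT
    print(f"{name}: {meters:.1f} m -> {relays_needed(meters)} relay(s)")
```

A run of exactly 100 meters needs no relay, while anything even slightly longer needs one; each further 100 meters adds another.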

Data Acquisition Software

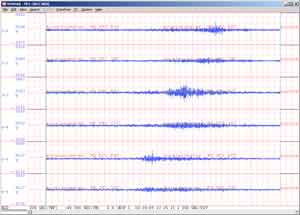

The transducers and the hardware used to achieve synchronous operation are only half of the solution. The bridge also required continuous monitoring, 24/7/365. That meant a dedicated computer running software able to stream acquired data continuously from each instrumentation node to the computer's hard drive for permanent storage. The result would be large data files that require an efficient review method. DATAQ Instruments' WinDaq software met the need to acquire data continuously from each node through its disk streaming capability. Every channel of every node is sampled continuously, some as fast as 1 kHz, yielding data files of approximately 2 GB per day after data reduction, and each value in the resulting files is time- and date-stamped so that any event can be correlated with its actual date and time of occurrence. Reviewing data is fast and efficient with WinDaq software's playback component, and specific events can easily be identified and exported to other applications for further analysis.

Figure 4 is a compressed playback screen showing the northbound progression of a large vehicle across the bridge on the afternoon of March 30, 2009. Reading from the bottom of the screen to the top, and correlating it with the bridge schematic of Figure 3, we can clearly see that the vehicle is first detected by the node 3 accelerometers on the first bridge span (SP1, channels 6 and 5), then by the node 2 accelerometers on the second span (SP2, channels 4 and 3), and finally by the node 1 accelerometers on the third and final span (SP3, channels 2 and 1).
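The daily file size puts the value of data reduction in perspective. The arithmetic below computes the raw storage if all sixty-two channels were streamed at 1 kHz (the text says only some channels run that fast) and the aggregate sample rate that 2 GB per day corresponds to. It assumes 2 bytes per stored sample with no file overhead and decimal gigabytes; those are assumptions for illustration, not WinDaq file-format details taken from this article.

```python
BYTES_PER_SAMPLE = 2          # assumption: one 16-bit value per sample, no overhead
SECONDS_PER_DAY = 24 * 60 * 60


def bytes_per_day(channels: int, rate_hz: int,
                  bytes_per_sample: int = BYTES_PER_SAMPLE) -> int:
    """Raw storage for `channels` channels all sampled at `rate_hz` for one day."""
    return channels * rate_hz * bytes_per_sample * SECONDS_PER_DAY


def aggregate_rate_for(daily_bytes: float,
                       bytes_per_sample: int = BYTES_PER_SAMPLE) -> float:
    """Total samples per second (all channels combined) that fill `daily_bytes`."""
    return daily_bytes / (bytes_per_sample * SECONDS_PER_DAY)


ceiling = bytes_per_day(62, 1000)
print(f"all 62 channels at 1 kHz: {ceiling / 1e9:.1f} GB/day")               # 10.7 GB/day
print(f"2 GB/day corresponds to ~{aggregate_rate_for(2e9):,.0f} samples/s")  # ~11,574
```

Under these assumptions, the reported 2 GB per day is roughly a fifth of the all-channels-at-1-kHz ceiling; the article does not say how the reduction is performed.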

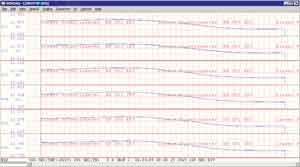

In another example, Figure 5 details the temperature-driven movement of three northbound and two southbound bridge expansion joints over a twenty-four-hour period. The measurements were taken from several linear potentiometers (LPs) calibrated in inches. Again reading from the bottom of the screen up, first are the node 4 LPs that measure the expansion of the span 1 southbound lanes (SP1, channels 6 and 5). Next are the node 4 LPs that measure complementary positions on the northbound lanes (SP1, channels 4 and 3). Finally, the node 1 LPs measure expansion joint activity for the northbound lanes at span 3 (SP3, channels 2 and 1).
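Calibrating the LPs in inches amounts to dividing the output by the 200 mV/inch sensitivity listed in Table 2, and the size of the movement can be sanity-checked with the linear thermal expansion formula ΔL = α·L·ΔT. A minimal sketch: the 6.0 × 10⁻⁶ per °F coefficient is a commonly used design value for normal-weight concrete, and the 500 ft deck length and 30 °F swing are hypothetical inputs, none of them figures from this article.

```python
LP_SENSITIVITY_MV_PER_IN = 200.0   # linear potentiometer sensitivity from Table 2


def lp_inches(millivolts: float) -> float:
    """Convert a linear-potentiometer reading in mV to displacement in inches."""
    return millivolts / LP_SENSITIVITY_MV_PER_IN


def thermal_movement_in(length_ft: float, delta_t_f: float,
                        alpha_per_f: float = 6.0e-6) -> float:
    """Unrestrained thermal expansion dL = alpha * L * dT, in inches.

    The default alpha is a commonly used design value for normal-weight
    concrete (an assumption here, not a figure from this article).
    """
    return alpha_per_f * (length_ft * 12.0) * delta_t_f


# A 50 mV change at the LP output is a quarter inch of joint movement.
print(lp_inches(50.0))                           # 0.25
# Hypothetical 500 ft of deck and a 30 degF day-to-night swing.
print(round(thermal_movement_in(500, 30), 2))    # 1.08
```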

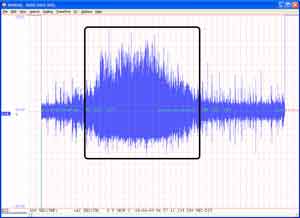

A final example of day-to-day activity on the I-35W Bridge, and of the sensitivity of the instruments that detect it, can be found in Figure 6. The enclosed box shows the havoc created by a snowplow at 7:32 AM on April 6, 2009, as detected by an accelerometer at node 3 in the northbound lanes. Compare the enclosed section with the relative calm both before and after the plow passes in this highly compressed plot.
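Events like the snowplow pass are easy to spot by eye during playback, but the same kind of search can be automated. A minimal sketch, using a generic sliding-window RMS threshold (a common technique swapped in here for illustration, not a WinDaq feature described in this article): convert accelerometer volts to g with the node 3 sensitivity from Table 2, then report the spans where the windowed RMS exceeds a threshold. The window length, the 0.05 g threshold, and the synthetic test signal are hypothetical.

```python
import math

ACCEL_SENSITIVITY_V_PER_G = 1.007  # node 3 accelerometers, Table 2


def to_g(volts):
    """Convert accelerometer output in volts to acceleration in g."""
    return [v / ACCEL_SENSITIVITY_V_PER_G for v in volts]


def moving_rms(x, window):
    """RMS over a sliding window; output index j covers x[j:j + window]."""
    if len(x) < window:
        raise ValueError("signal shorter than window")
    acc = sum(v * v for v in x[:window])
    out = [math.sqrt(acc / window)]
    for i in range(window, len(x)):
        acc += x[i] * x[i] - x[i - window] * x[i - window]
        out.append(math.sqrt(max(acc, 0.0) / window))  # guard rounding below zero
    return out


def find_events(x_g, window, threshold_g):
    """Return (first, last) sample spans covered by windows whose RMS exceeds threshold."""
    events, start = [], None
    for j, r in enumerate(moving_rms(x_g, window)):
        if r > threshold_g and start is None:
            start = j
        elif r <= threshold_g and start is not None:
            events.append((start, j + window - 2))  # end of the last window above threshold
            start = None
    if start is not None:
        events.append((start, len(x_g) - 1))
    return events


# Synthetic signal in volts: quiet, a 20-sample burst (samples 100-119), quiet again.
signal_v = [0.001] * 100 + [0.5, -0.5] * 10 + [0.001] * 100
print(find_events(to_g(signal_v), window=10, threshold_g=0.05))  # [(91, 128)]
```

The reported span is wider than the burst itself by up to one window on each side, a normal consequence of windowed detection; sample indices can then be mapped to clock times using the file's time and date stamps.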

Conclusions

Constant monitoring of Saint Anthony Falls Bridge vital signs will provide unprecedented insight into bridge condition for the life of the structure. Evaluating wear and tear no longer needs to be solely a subjective, manual inspection exercise. It can now be supplemented by quantitative analysis that evaluates bridge performance on an annual, monthly, or even daily basis if necessary, making manual inspection that much more productive. Advanced data acquisition systems are at the heart of this process. Given the bridge's length and the number and diversity of measurement points and types, only a synchronous data acquisition system could produce meaningful results. DATAQ Instruments' Ethernet data acquisition products fulfilled this requirement with hardware and software that will generate multi-channel data files describing bridge condition far into the future.

Figure 1 - An artist's conception of the new I35W St Anthony Falls Bridge (courtesy Minnesota Department of Transportation).

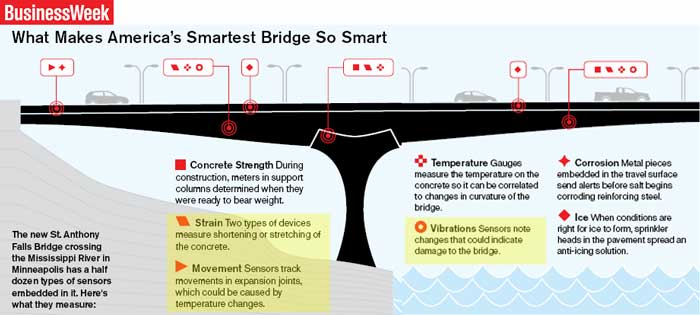

Figure 2 - The I35W Saint Anthony Falls Bridge was featured in BusinessWeek magazine's Smart Infrastructure series in the March 2, 2009 issue. Highlighted in yellow are the measurements made by DATAQ Instruments data acquisition systems.

Figure 3 - Schematic representation of the location of eight instrumentation nodes (Nx) and relays (Rx) used to monitor bridge health.